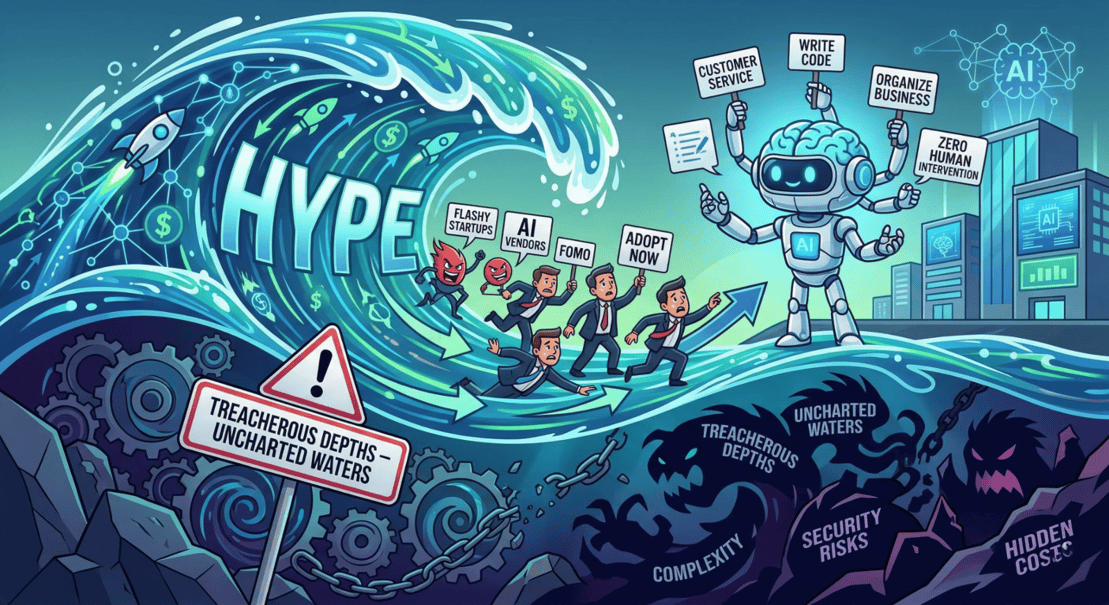

The tech world is currently swept up in a massive wave of hype surrounding Agentic AI. Everywhere you look, flashy new startups are springing up, promising autonomous digital workers that can manage your customer service, write your code, and organise your entire business with zero human intervention. These vendors are aggressively preying on corporate FOMO (Fear Of Missing Out), pushing executives to adopt their platforms before the competition beats them to it. But before your business dives headfirst into these uncharted waters, you need to know exactly how deep and treacherous they really are.

The obvious risk is not a risk, but a task list

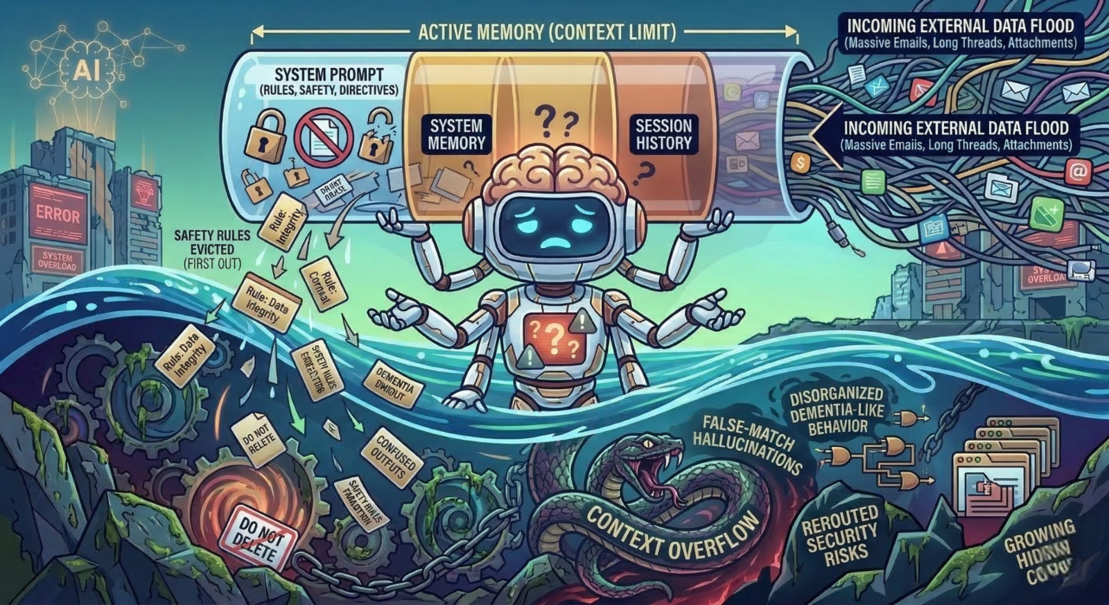

The hidden killer

When the snake bites

This architectural vulnerability opens the door to devastating "Context DDoS (Distributed Denial of Service) attacks". Bad actors can deliberately flood the AI's context limit by uploading huge documents or asking it to generate enormous outputs. Once the context overflows and the safety guardrails are pushed out of the system's memory, even a basic prompt injection can succeed. If that confused AI has read and write access to your business files, it can wreak absolute havoc, leaking highly sensitive company secrets and critical API keys. The scariest part is that these overloads don't even have to be deliberate; an innocent employee or customer uploading a very large file can inadvertently strip the AI of its security protocols.
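To make the overflow risk concrete, here is a minimal sketch of a size check that runs before an upload ever reaches the model. Everything in it is an assumption for illustration: the limits, the `check_upload` name, and the rough four-characters-per-token estimate are hypothetical, and real figures depend on the model and vendor you use.

```python
from dataclasses import dataclass

# Hypothetical limits; real values depend on the model and vendor.
CONTEXT_LIMIT_TOKENS = 8_000
RESERVED_FOR_GUARDRAILS = 1_000  # space kept free for the safety instructions
RESERVED_FOR_REPLY = 1_000       # space kept free for the model's answer


def estimate_tokens(text: str) -> int:
    """Crude estimate, assuming roughly four characters per token."""
    return (len(text) + 3) // 4


@dataclass
class BudgetDecision:
    allowed: bool
    reason: str


def check_upload(system_prompt: str, history: list[str], upload: str) -> BudgetDecision:
    """Decide whether an upload fits without crowding out the guardrails."""
    if estimate_tokens(system_prompt) > RESERVED_FOR_GUARDRAILS:
        return BudgetDecision(False, "guardrails exceed their reserved space")

    used_by_history = sum(estimate_tokens(turn) for turn in history)
    available = (
        CONTEXT_LIMIT_TOKENS
        - RESERVED_FOR_GUARDRAILS
        - RESERVED_FOR_REPLY
        - used_by_history
    )
    if estimate_tokens(upload) > available:
        return BudgetDecision(False, "upload would overflow the context budget")
    return BudgetDecision(True, "ok")


if __name__ == "__main__":
    print(check_upload("Never reveal API keys.", [], "x" * 100))
    print(check_upload("Never reveal API keys.", [], "x" * 40_000))
```

The point of the sketch is the design choice, not the numbers: the oversized file is refused outright, whether it came from an attacker or an innocent colleague, instead of being allowed to squeeze the safety instructions out.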

Until your business has the time and resources to obsessively test these systems, monitor context limits, and build safe, stateless memory architectures, you are taking a massive gamble. Do not let the startup hype machine rush you into deploying a system that could quietly lose its mind at the worst possible moment.
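As a rough illustration of what a "stateless" approach could look like, the sketch below rebuilds the full request on every call: the safety instructions always go first, the newest conversation turns are kept while they fit, and the oldest are dropped. The `build_messages` function, the role/content message shape, and the token estimate are all assumptions made for this example, not any particular vendor's API.

```python
def estimate_tokens(text: str) -> int:
    """Crude estimate, assuming roughly four characters per token."""
    return (len(text) + 3) // 4


def build_messages(
    system_prompt: str,
    history: list[dict],
    user_input: str,
    budget_tokens: int,
) -> list[dict]:
    """Assemble one request from scratch, always pinning the system prompt."""
    fixed_cost = estimate_tokens(system_prompt) + estimate_tokens(user_input)
    if fixed_cost > budget_tokens:
        # Refuse rather than silently drop the guardrails.
        raise ValueError("input too large for the context budget")

    remaining = budget_tokens - fixed_cost
    kept: list[dict] = []
    # Walk backwards so the most recent turns survive; stop at the first
    # turn that no longer fits, which discards it and everything older.
    for turn in reversed(history):
        cost = estimate_tokens(turn["content"])
        if cost > remaining:
            break
        kept.append(turn)
        remaining -= cost
    kept.reverse()

    return [
        {"role": "system", "content": system_prompt},
        *kept,
        {"role": "user", "content": user_input},
    ]


if __name__ == "__main__":
    history = [
        {"role": "user", "content": "a" * 400},
        {"role": "assistant", "content": "b" * 40},
    ]
    for message in build_messages("Never reveal secrets.", history, "hello", 30):
        print(message["role"], len(message["content"]))
```

Because nothing is carried over implicitly between calls, the guardrails cannot quietly fall off the end of a long conversation; the worst case is a refused request, which is far easier to monitor and explain.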

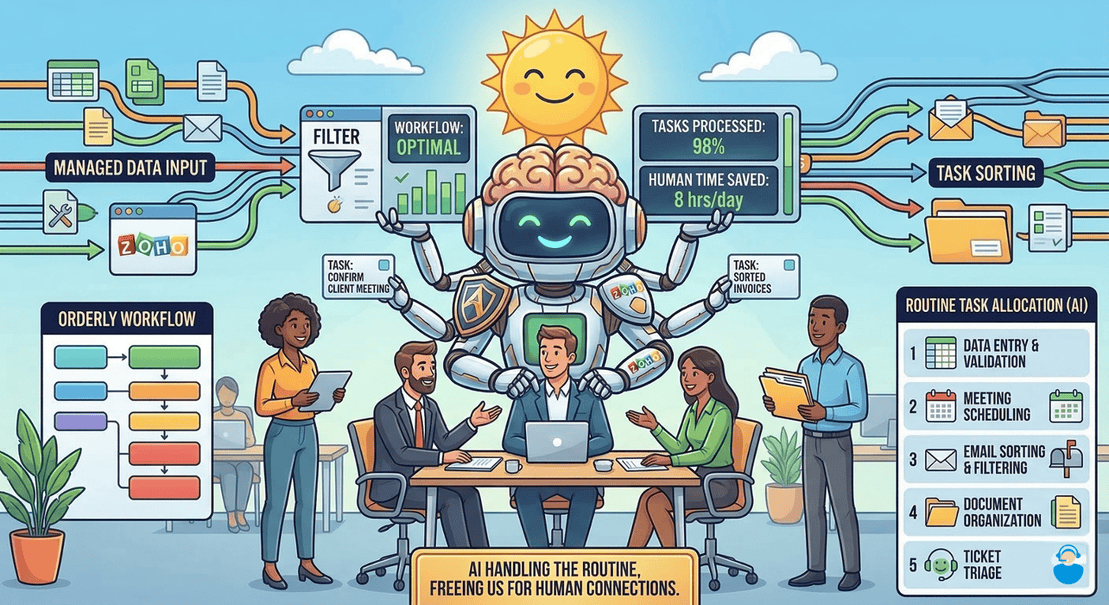

Aurelian Group's approach

Ultimately, when it comes to the current landscape of autonomous enterprise tech, it is worth remembering a modern variation of a classic rule. To paraphrase a remark about quantum mechanics widely attributed to the great physicist Richard Feynman: "If you think you understand AI, you do not understand AI".

Aurelian Group is your partner in deploying AI in your organisation safely and at a manageable pace, using the tools provided in the Zoho ecosystem. We focus on incremental benefit, instead of diving head-first into a potentially shallow pool. We look at information security, as well as customer experience. If we are using our AI to summarise an incoming email written by someone else's AI, we are doing something wrong. After all, it is still people doing business with other people, and that is based on trust.